In addition to using a web-based notebook development environment, Data Scientists gain many benefits from also developing with an IDE like Eclipse. Here are some of these benefits: a more industrialized development process, support for bigger projects, better alignment with the methodologies and tools recommended by the company’s IT department, easier integration with version control systems, a more natural test-driven approach, and so on. Note also that, when developing on a Spark cluster with Hadoop YARN, a notebook client-server approach (e.g. with Jupyter or Zeppelin notebook servers) forces all developers to depend on the same YARN configuration, which is centralized on the notebook server side.
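To make the YARN point concrete: outside a notebook server, each developer can point a run at their own Hadoop client configuration. The following is only a minimal sketch, assuming the application is launched through `spark-submit` and that the developer keeps a local copy of the Hadoop client files; the helper name `build_submit` and both paths are hypothetical and do not come from the original article.

```python
import os
from typing import Dict, List, Tuple


def build_submit(app_path: str, hadoop_conf_dir: str) -> Tuple[List[str], Dict[str, str]]:
    """Return the spark-submit command and environment for one developer.

    hadoop_conf_dir is assumed to hold this developer's own copy of the
    Hadoop client files (for example yarn-site.xml and core-site.xml).
    """
    env = dict(os.environ)
    # Spark locates the YARN cluster through the files in this directory.
    env["HADOOP_CONF_DIR"] = hadoop_conf_dir
    cmd = ["spark-submit", "--master", "yarn", "--deploy-mode", "client", app_path]
    return cmd, env


if __name__ == "__main__":
    # Hypothetical paths, for illustration only.
    cmd, env = build_submit("count_words.py", "/path/to/my/hadoop/conf")
    print(" ".join(cmd))
    # To actually launch: subprocess.run(cmd, env=env, check=True)
```

Because the configuration directory is passed per run, two developers can target different queues or clusters without touching a shared server-side setting.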

CONFIGURE ECLIPSE FOR PYTHON ON MAC CODE

Thus in a same web-based Python Notebook project (e.g: Jupyter), those Data Scientists may execute some cells of code vertically on the Notebook server, and also other cells of code horizontally on a Spark cluster.īut in a general way, what about if Data Scientists want their new projects in Python to be more industrial ? However, Spark SQL with the DataFrames and Spark Machine Learning enable Data Scientists who want to develop in Python of increasing their program’s performances using a cluster. Python is one of the most famous programming language used by Data Scientists who develop programs in order to process Feature Engineering and Machine Learning algorithms by using rich APIs like Scikit-Learn and Pandas on a single multi-cores server. Step 11: Deploying your Python-Spark application in a Production environment Introduction

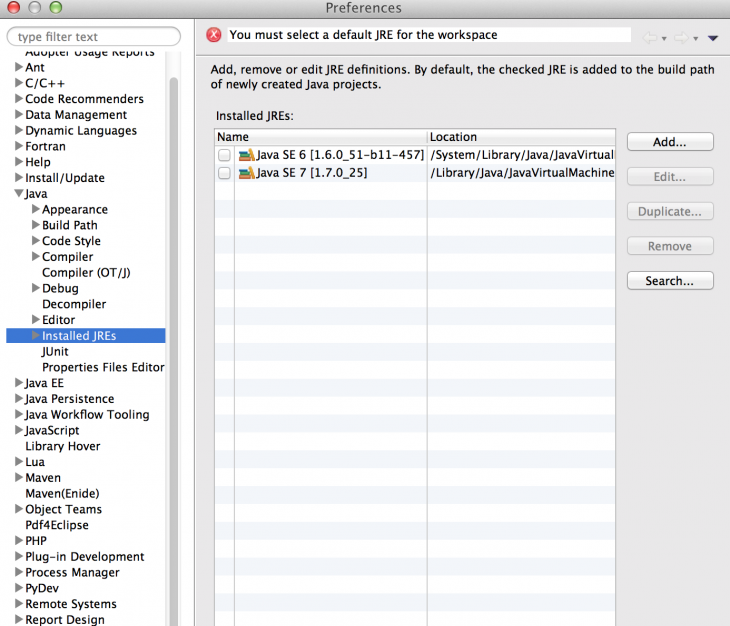

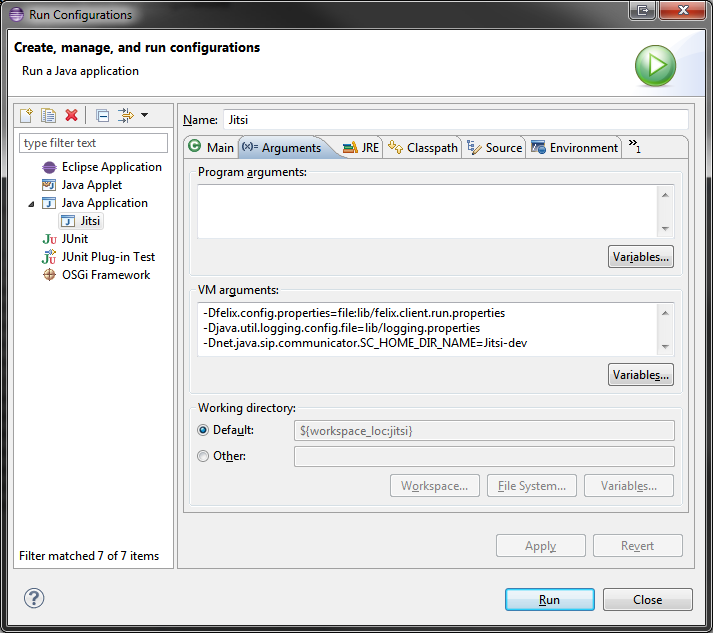

Step 10: Executing your Python-Spark application on a cluster with Hadoop YARN Step 9: Reading a CSV file directly as a Spark DataFrame for processing SQL Step 8: Executing your Python-Spark application with Eclipse Step 7: Creating your Python-Spark project “CountWords” Step 6: Configuring PyDev with Spark’s variables Step 4: Configuring PyDev with a Python interpreter Let’s have a look under the hood of PySpark
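As a preview of the kind of code Steps 7 and 9 lead to, here is a rough sketch of a word count and of a CSV file read as a DataFrame and queried with SQL. It is not the article's own listing: the file names `input.txt` and `records.csv`, the columns `category` and `amount`, and all function names are assumptions made for illustration, and it presumes `pyspark` is installed with Spark 2.0 or later.

```python
from operator import add
from typing import List


def split_words(line: str) -> List[str]:
    """Split one line of text into lowercase words."""
    return line.lower().split()


def count_words(sc, path: str):
    """Return (word, count) pairs for a text file, given a SparkContext."""
    return (
        sc.textFile(path)
        .flatMap(split_words)
        .map(lambda word: (word, 1))
        .reduceByKey(add)
        .collect()
    )


def average_by_category(spark, csv_path: str):
    """Read a CSV file with a header row as a DataFrame and query it with SQL."""
    df = spark.read.csv(csv_path, header=True, inferSchema=True)
    df.createOrReplaceTempView("records")
    # `category` and `amount` are hypothetical column names.
    return spark.sql(
        "SELECT category, AVG(amount) AS avg_amount FROM records GROUP BY category"
    )


def main() -> None:
    # Imported here so the helpers above can be read without Spark installed.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.master("local[*]").appName("CountWords").getOrCreate()
    try:
        for word, count in count_words(spark.sparkContext, "input.txt"):
            print(word, count)
        average_by_category(spark, "records.csv").show()
    finally:
        spark.stop()
```

Calling `main()` runs both parts locally with the `local[*]` master; the same functions can run on a cluster by changing only how the session is created.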